Analog engineers of my generation had a common hero in Bob Pease (1940-2011). Bob was a brilliant analog solid state circuit designer as well as a reductionist. Bob embodied the principle of "Keep it simple, stupid!" (KISS). He wrote a monthly column for Electronic Design magazine called Pease Porridge, whose installments he always titled, “What’s all this about [topic of the month], anyway?” It was Bob’s friendly reminder to the rest of us regarding the importance of KISS in engineering. I’m no Bob Pease, but here is my tribute to my hero.

Understanding Jitter

Let’s start by understanding jitter: its definition, constituents, and practical limits.

The formal definition of jitter is “the deviation of threshold crossing times from their expected positions.” That’s it. I expect my signal edges to cross my decision threshold at certain times, and if the actual crossings occur early or late, that’s jitter.
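
As a minimal sketch of this definition, the Python snippet below subtracts each measured crossing time from its nearest expected position. It assumes the expected crossings sit on a uniform grid spaced one unit interval (UI) apart; the function name `crossing_jitter` and the sample values are hypothetical, chosen only for illustration.

```python
def crossing_jitter(crossings, ui, t_first=0.0):
    """Return the deviation of each measured threshold crossing from the
    nearest expected position on the grid t_first + k * ui.

    Positive values are late crossings, negative values are early ones.
    All times share the same unit (picoseconds in the example below).
    """
    deviations = []
    for t in crossings:
        k = round((t - t_first) / ui)          # index of the nearest expected crossing
        deviations.append(t - (t_first + k * ui))
    return deviations


# Hypothetical measurement: 100 ps unit interval, five observed crossings.
measured_ps = [0.0, 102.0, 197.0, 300.0, 401.5]
print(crossing_jitter(measured_ps, ui=100.0))
```

Here the second crossing arrives 2 ps late and the third arrives 3 ps early; those deviations are the jitter.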

Types of Jitter

Total jitter is a composite of several constituents, which can be grouped into the following two major categories:

Correlated

Correlated jitter is jitter caused by properties of the signal itself. It has two sub-constituents:

- Inter-symbol interference, which is caused by the single bit response in one unit interval (UI) bleeding into those adjacent to it

- Duty cycle distortion, which is caused by asymmetries in the output driver pull-up and pull-down circuitry.

Uncorrelated

Uncorrelated jitter is jitter caused by things having nothing to do with the signal itself. It is most typically divided into the following two sub-constituents:

- Periodic jitter, which is caused by nearby clock sources and has a very narrow spectral signature

- Random jitter, which functions as the catch-all bucket for everything that can’t be identified as periodic. This so-called “random” jitter is typically modeled as having a Gaussian probability density function (PDF), for reasons we’ll discuss in short order. The immediate takeaway is just this: assuming this component of jitter to be Gaussian implies an assumption that it is unbounded. (A Gaussian PDF never reaches zero.)

Does this assumption of unbounded jitter seem reasonable to you? That is, would you agree that there is some non-zero probability that a threshold crossing expected at time t could occur at t + Infinity?

Physical Source of Jitter

In trying to understand the apparent quandary posed by the question above, it helps to understand the physical source of jitter. There are, of course, two theories:

Jitter is a direct, or primary, phenomenon. This would imply that the aggressors are actually pushing the victim left or right, that is, advancing or delaying time for the victim. But how could an aggressor directly manipulate time as experienced by the victim? That would require that the aggressor and victim be moving relative to each other at nearly the speed of light. And we know that that’s never going to be the case, at least in any design scenario that we’re interested in. So, it must be that…

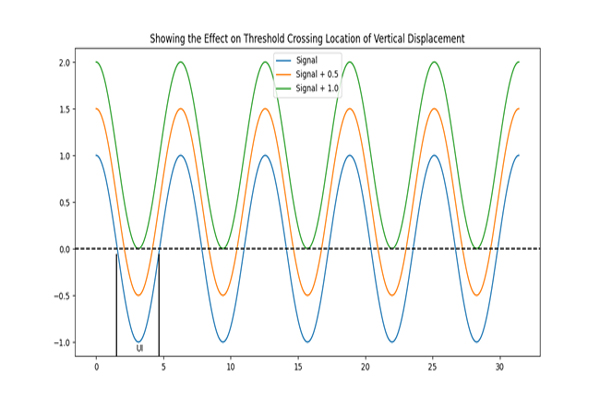

Jitter is an indirect, or secondary, phenomenon. That is, the aggressors are pushing up and down on the victim and, as a result, its threshold crossings are moving left and right because the victim’s edges are not perfectly vertical. This is illustrated in Figure 1. This explanation is much more plausible, because we know the electromagnetic field obeys superposition, which implies that voltage noise is additive at all points in space and time.

Figure 1. A depiction of how vertical displacement due to noise creates jitter.

True Bounds of "Random" Jitter

Now, given our new understanding of the physical source of jitter, can we establish some bounds to this supposedly unbounded process? Yes, we can. This is illustrated in Figure 1.

Note the following two features of the figure. First, the bounds of our random jitter are approximately +/-UI/2, which is quite satisfying to our intuition. Intuitively, it seems that, no matter the severity of the perturbation, any edge threshold crossing ought to be confined in time to a window one unit interval wide and centered about the expected crossing time. And that is precisely what we see.

Second, the threshold crossings don’t jitter independently. Rather, they travel in pairs, oscillating towards and away from each other about some stable middle point. If one crossing arrives late, for instance, then the two adjacent to it will tend to arrive early. The result is that the sum (or average, if you prefer) of this random jitter is quite close to zero. That’s very comforting, because it would be quite difficult to swallow the proposal that random jitter is somehow magically able to lengthen or shorten the propagation delay of our channel.

Also note that we are not confining the voltage noise to being finite or bounded. (We can’t, because we can’t prove that there aren’t an infinite number of independent voltage sources operating in the universe, and we believe in superposition of the electromagnetic field.) What we are doing, however, is noting that the relationship between voltage noise and jitter is not linear. It is, in fact, bounded, due to the finite amplitude of the victim, as illustrated in Figure 1.
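
The bounded, non-linear mapping can be sketched numerically. The snippet below assumes the victim is an ideal sinusoid with a period of two unit intervals (an alternating 1010 pattern) and that the noise is a constant voltage offset over the duration of the edge; both are simplifying assumptions, and the function name `crossing_shift` is hypothetical.

```python
import math


def crossing_shift(v_noise, amplitude, ui):
    """Time shift of the rising zero crossing of amplitude * sin(pi * t / ui)
    when a constant offset v_noise is added to the waveform.

    Solving amplitude * sin(pi * t / ui) + v_noise = 0 for t gives
    t = -(ui / pi) * asin(v_noise / amplitude).
    Returns None when |v_noise| >= amplitude, because the waveform then
    never crosses the threshold and the edge is lost altogether.
    """
    if abs(v_noise) >= amplitude:
        return None
    return -(ui / math.pi) * math.asin(v_noise / amplitude)


# Sweep the noise from small to nearly the full victim amplitude (UI = 1).
for v in (0.01, 0.1, 0.5, 0.9, 0.999):
    exact = crossing_shift(v, amplitude=1.0, ui=1.0)
    linear = -v / math.pi                      # small-signal (constant slope) estimate
    print(f"v = {v:5.3f}  shift = {exact:+.4f} UI  linear estimate = {linear:+.4f} UI")
```

Under these assumptions the shift tracks the linear estimate for small noise, then saturates toward -UI/2 as the noise approaches the victim amplitude. Noise of the opposite sign saturates toward +UI/2.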

Modeling Random Jitter

Given what we now understand, regarding the real bounds of random jitter, why do we model it with a Gaussian PDF, which we know to be unbounded?

Survey of Probability Distributions

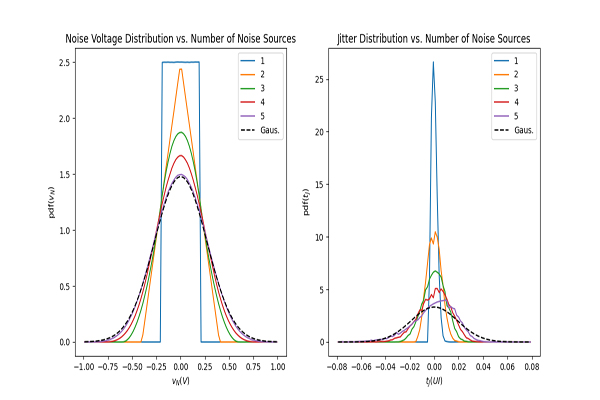

Before we state the answer to the question above, let’s do a very illuminating experiment. Let’s see what happens to the composite probability distribution of several unrelated noise sources added together, each having a uniform distribution. For reference, we’ll also include an actual Gaussian PDF.

Figure 2. A depiction of voltage noise and jitter distributions as more independent noise sources are added.

Next, looking at the jitter distribution, we see the same trend toward a Gaussian shape as the number of independent noise sources is increased. (This is not actually surprising, as the mapping between noise voltage and jitter is just the inverse of the slope of the sinusoid at the threshold crossing, which is fairly constant near zero, yielding a nearly linear mapping.) However, the fit isn't nearly as clean as in the noise voltage case. Unfortunately, this is fairly typical of jitter measurements taken in "bit-by-bit" fashion, which is why the extrapolation of bit-by-bit measurements to very low bit error ratios is so fraught with risk.
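
The experiment described in this section can be approximated with a few lines of Python. This is only a rough sketch, not the procedure used to generate Figure 2: it sums independent noise sources, each uniform on [-1, 1], and reports the excess kurtosis of the result, which is zero for a true Gaussian. The sample count, the seed, and the choice of kurtosis as the figure of merit are all assumptions made for illustration.

```python
import random
import statistics


def summed_uniform_noise(n_sources, n_samples, rng):
    """Draw n_samples of the sum of n_sources independent uniform(-1, 1) values."""
    return [sum(rng.uniform(-1.0, 1.0) for _ in range(n_sources))
            for _ in range(n_samples)]


def excess_kurtosis(samples):
    """Fourth standardized moment minus 3; equals 0 for a Gaussian."""
    mean = statistics.fmean(samples)
    var = statistics.pvariance(samples, mean)
    m4 = statistics.fmean((x - mean) ** 4 for x in samples)
    return m4 / var ** 2 - 3.0


rng = random.Random(0)                         # fixed seed for repeatability
for n in (1, 2, 4, 8):
    k = excess_kurtosis(summed_uniform_noise(n, 20000, rng))
    # For a sum of n independent uniforms, theory gives -1.2 / n.
    print(f"{n} source(s): excess kurtosis = {k:+.3f} (theory {-1.2 / n:+.3f})")
```

The excess kurtosis shrinks toward zero as sources are added, which is the central limit theorem at work. Note that the summed noise in this sketch remains strictly bounded (by +/-n), even as its shape approaches the unbounded Gaussian.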

Why We Don't Care About Unboundedness

So, why don’t we care that we’re representing a bounded property (“random” jitter), using an unbounded model (Gaussian PDF)? Because, for any reasonably designed link, the value of our Gaussian PDF out at +/-UI/2 is so small as to be well below our threshold for concern. The value out at those extremities is so low that to worry about differentiating it from zero is pointless. And, as we saw in Figure 2, after even just a few independent noise sources have been considered, the Gaussian PDF is an excellent model of our actual distribution in our region of interest.
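
To put a number on "so small," the sketch below evaluates the probability mass that a zero-mean Gaussian places beyond +/-UI/2. The RMS jitter values used here (0.02 UI and 0.05 UI) are hypothetical examples, not figures taken from any particular link or standard.

```python
import math


def prob_beyond_half_ui(sigma_ui):
    """Probability that a zero-mean Gaussian with standard deviation
    sigma_ui (expressed in UI) falls outside the interval +/-0.5 UI.

    For a Gaussian, P(|X| > a) = erfc(a / (sigma * sqrt(2))).
    """
    return math.erfc(0.5 / (sigma_ui * math.sqrt(2.0)))


for sigma in (0.02, 0.05):                     # hypothetical RMS random jitter, in UI
    print(f"sigma = {sigma:.2f} UI -> P(|jitter| > UI/2) = {prob_beyond_half_ui(sigma):.3e}")
```

With an RMS jitter of 0.05 UI, the +/-UI/2 bounds sit ten standard deviations out, and the probability beyond them is on the order of 1e-23. That is far below the bit error ratio targets in common use, so truncating the Gaussian there would change nothing that matters.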

What About Clock PPM?

One thing we haven’t discussed, which does apply a direct perturbation to relative time measurements, is the slight disagreement between the Tx and Rx clock frequencies, typically measured in parts per million (PPM). In fact, if the Rx were to operate in an open loop fashion, not tracking the clock frequency used by the Tx, then we could have jitter approaching infinity, as the slight difference in frequency between the Tx and Rx clocks causes associated edges of the two to drift further and further apart as time advances. (This would be referred to as “wander” by those with experience in the craft, alluding to the extremely low frequency nature of this phenomenon.) However, to my knowledge, it is impossible to build a reliable serial communication link without some sort of Rx tracking of the Tx clock frequency (and phase). So, the point is moot.
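
A quick back-of-the-envelope calculation shows how fast an untracked offset would accumulate. The sketch below assumes a constant frequency offset and a completely open loop Rx; the function name `bits_until_drift` and the 100 PPM example value are hypothetical.

```python
def bits_until_drift(ppm, drift_ui=1.0):
    """Number of unit intervals that elapse before a constant, untracked
    Tx/Rx frequency offset of `ppm` parts per million accumulates
    `drift_ui` unit intervals of relative phase drift.

    Each UI contributes ppm * 1e-6 UI of drift, so the drift grows linearly.
    """
    return drift_ui / (ppm * 1e-6)


print(f"{bits_until_drift(100):,.0f} bits to drift one full UI at 100 PPM")
print(f"{bits_until_drift(100, drift_ui=0.5):,.0f} bits to drift UI/2 at 100 PPM")
```

At 100 PPM the edges slip by a full UI after about 10,000 bits, which is why open loop operation is not an option for a link that runs continuously.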

Now, a very interesting question to entertain is, “How does the exact nature of our Rx frequency/phase tracking affect the nature of observed jitter at the Rx slicer (decision point)?” That might be an excellent topic for a follow-up piece.

Conclusion

While the point may be too academic for most practicing serial link designers to concern themselves with, it is nevertheless comforting to know that our intuition that unbounded jitter simply shouldn’t be possible is, in fact, correct. Although, for any reasonably designed link, the difference between the bounded and unbounded models becomes moot, it is nevertheless reaffirming that our engineering instincts are in line with the actual behavior of the physical world we’re modeling.